Well….that blew up! 😀

Last week I published a video (which you can watch below if you haven’t seen it) about the evolution of the ROWID.

One particular demo in the video shows how the ROWID for a given row might change simply by issuing an UPDATE statement. Unsurprisingly, Twitter (as Twitter often does) totally lost its mind 😀. People were saying that every application ever built would be going to hell in a handbasket and that this would be the downfall of modern IT etc etc.

Of course, ever since 9i there have been plenty of mechanisms via which the ROWID for a given row might change as a result of some operation, for example, the FLASHBACK TABLE command, ALTER TABLE SHRINK command, partition table row movement, and in later releases, the ability to shuffle data around in an online fashion, aka ALTER TABLE MOVE ONLINE. Thus for over 20 years now the reality has been that if you are using the ROWID as a mechanism to re-query a previously selected row from a table in the database, you always should have been coding your applications to handle the possibility that the ROWID might point to row that no longer exists, or could even be a different row altogether. Of course people have not been doing this, and thus the ensuing panic when my video showed that the ROWID is not as unchanging as people assumed it to be.

But this post is not about the ROWID it is about database design. The discussion on Twitter turned from ROWID’s to the fact that application development tools such as APEX and Forms offer developers the choice of using ROWIDs or primary keys for database table row access. We’ve always recommended to use primary keys over ROWID’s but we do give the flexibility of choice. So now the topic morphed into what the best key should be when building APEX apps.

History has proven time and time again that the moment you commence a discourse on surrogate keys, there will be an equal and opposite reaction from the natural keys camp.

I stress – I ‘m not trying to jump down the throats of the Twitter community here. The conversation was civil and people presented their arguments without profanity or insult. This is why I’m blurring the tweet authors – it is not about who made the tweets, but just that ultimately, we were heading down the path of the same arguments on natural versus surrogate keys, and there is plenty of validity in both sides of the discussion

At this point you’re probably thinking “Hey Connor, will you get to the damn point of the blog post!” 😀 and that’s fair enough. Here’s is my point: I don’t think the issue here is natural keys or surrogate keys. When we talk about “Natural versus Surrogate” I think the issue here is one word:

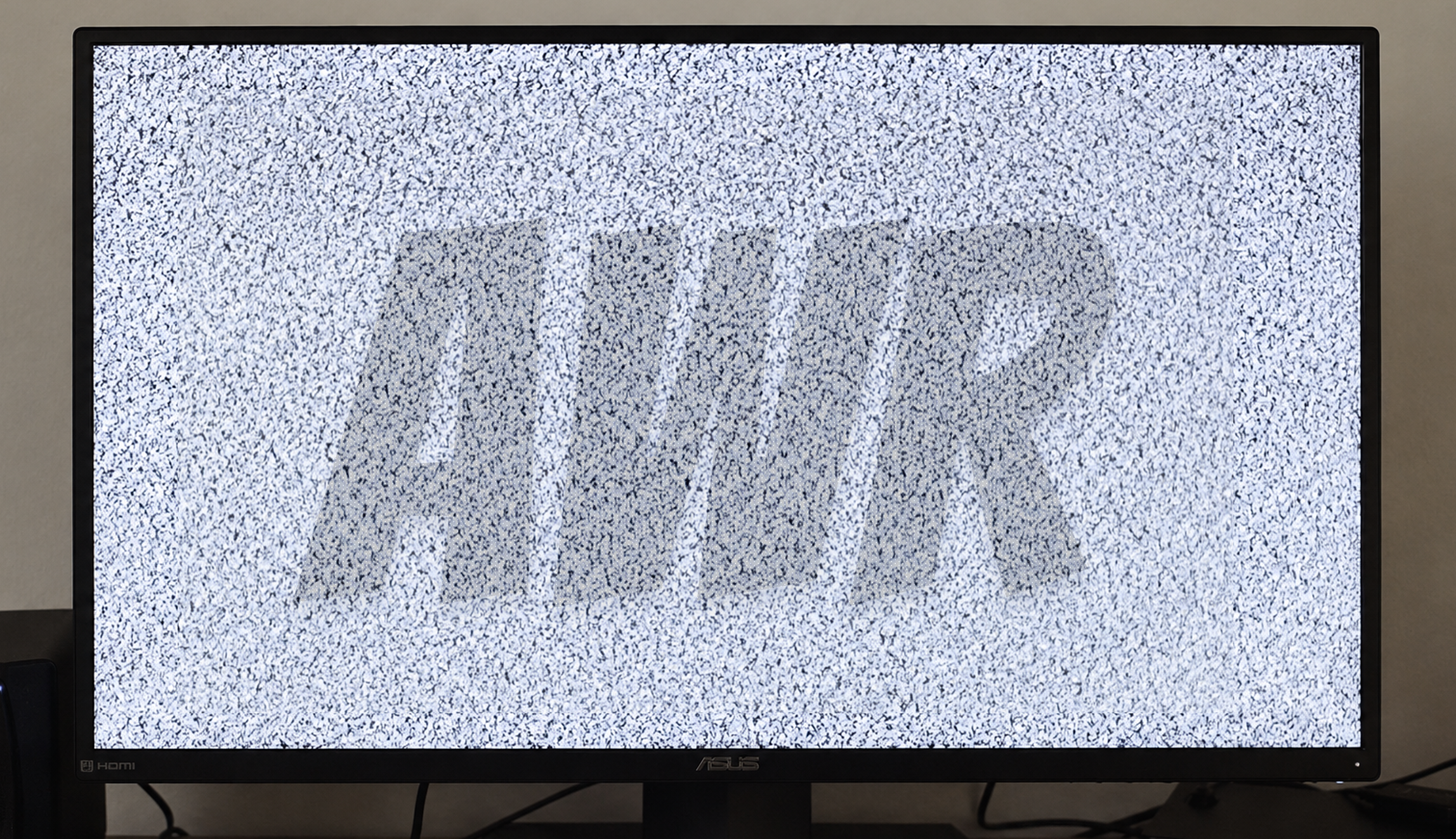

VERSUS.

Why is it always “VERSUS”?

This is where I think we IT practitioners can get lost in the weeds some times. We are all so focused on logical, methodical solutions to problems, that we always want to have one solution. One key to rule them all so to speak.

But I don’t see the need for any such mutual exclusion for how we choose our keys. For example, if I have a GENDER table, I’m totally happy to have a natural key for this, eg

In these more enlightened times, I may need to add additional rows such as Non-binary or Omni-gender, but there’s an excellent chance I can achieve this with a simple one or two letter key. That is going to make things easier not just for developers, but also for business users when they come to do ad-hoc query. The GENDER column on their CUSTOMERS table will have a natural easily understood meaning for when users are building predicates and they might only need to join back to the parent table when they truly need to (for descriptive names etc). I see no downsides to this natural key choice.

Conversely, if in this same database, the sales orders I take from customers have a natural key structure of

which then yields a child table of items for each order:

which then gives me a status history for each item in the order:

then you can safely assume that I’m getting increasingly concerned about

- the size of that key,

- the number of join conditions I’m going to have to write,

- the nightmare data maintenance scenario if the “immutable” key suddenly is no longer so due to a business decision or initial design error. Update cascade anyone? 😀

Thus I would definitely going to explore surrogate keys for these table(s).

“Oh my goodness! Natural and surrogate keys in the same schema?!?!? Is he insane???”

Similarly, this might not be isolated to the just the case of keys getting increasingly longer. If I was designing a table of invoices with an intended primary key of the invoice number, then if that real world invoice number is (say): INV-5D3H77A4DF, then I’m reasonably confident that this is going to fine as a natural key. But if that same business had real world invoice numbers of: PERTH-AUG-2022-174 then alarm bells are going off for me, because it looks obvious that historically this business has added meaning to various parts of the invoice number, and once it has meaning, then as sure as night follows day, there will one day be a request of “We need to change this invoice number from A to B everywhere it appears in the database”

It is for this same reason that a month ago at Kscope, when I had to step in and give Chris Saxon’s talk on Normalisation because sadly he could not travel to the event, I augmented his deck with the following slide, scattered at various places throughout the presentation

Remember that our goal is to build robust, functional, practical databases to sit beneath robust, functional, practical customer applications. It is not to win some fictional “Annual Coolest Tightest Database Design for 2022” award. If that was the case, we’d all be building databases in 6th normal form and throwing ourselves off cliff tops in frustration when we try to achieve anything practical with them.

Let’s ditch the “VERSUS” and just focus on building great databases that serve our customers well. Feel free to use natural and surrogate keys wherever they fit best based on the data you are modelling, the tools you are using, and the customer needs.

Leave a Reply